How Defined.fi Architected Real-Time DeFi

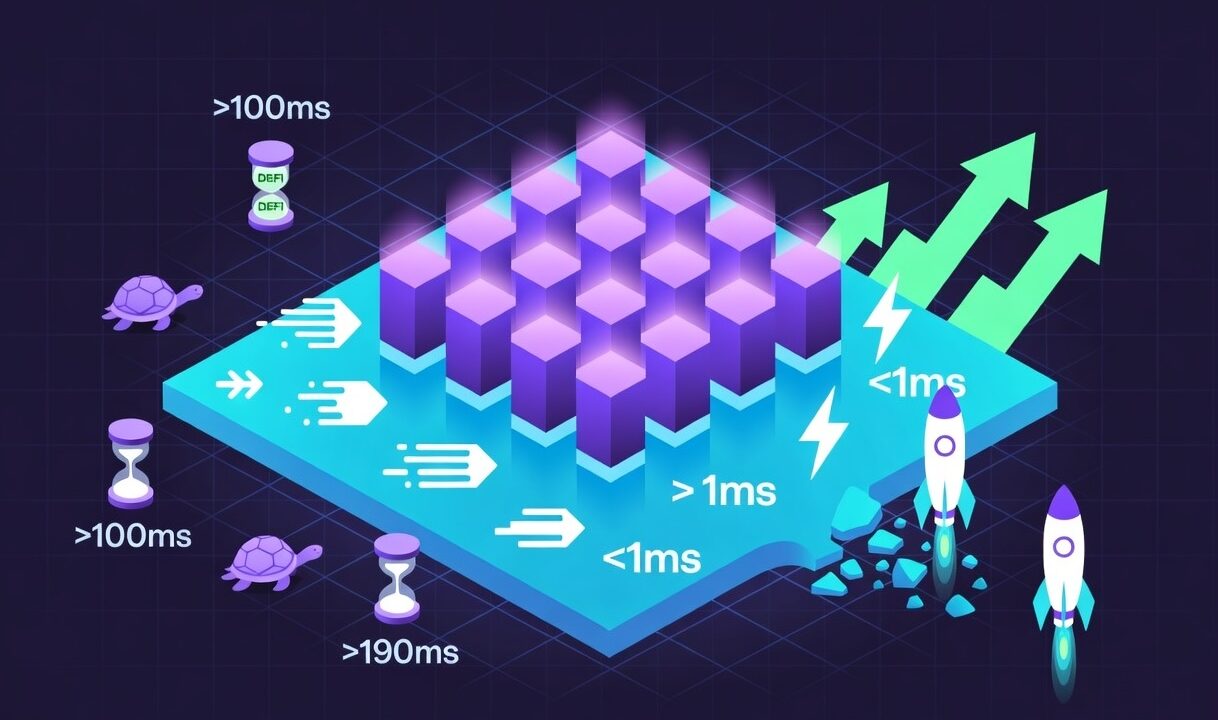

In the rapidly maturing world of decentralized finance, the difference between a highly profitable trading strategy and a catastrophic loss is rarely measured in days or even hours. It is measured in milliseconds. As institutional capital continues to flow into Web3, the tolerance for clunky, delayed user experiences has vanished. Modern traders expect the decentralized ecosystem to perform with the same blistering speed as traditional centralized exchanges like Nasdaq or the New York Stock Exchange. Achieving this real-time performance on top of distributed blockchain networks, however, is a monumental engineering challenge. This is where Defined comes in.

At the heart of Defined.fi’s technical approach is an architecture built by co-founder and system architect Braden Simpson alongside his engineering team, including Derek Binnersley on DevOps infrastructure and Nathan Lambert on front-end systems. The internal data engine they built led to the creation of Codex, a strategic pivot targeting institutional B2B players in DeFi. Together, they systematically dismantled the barriers to real-time data delivery, solving one of the industry’s most persistent problems: the latency moat.

This deep dive explores the mechanics of blockchain data indexing, the limitations of legacy systems, and the architectural decisions that enable the Defined platform, and its foundational Codex API, to deliver sub-second on-chain data to developers and traders worldwide.

The RPC Bottleneck: Defining the “Latency Problem”

To understand the magnitude of the engineering solution, one must first understand the depth of the problem. For years, the standard method for interacting with a blockchain involved querying a Remote Procedure Call (RPC) node: a server running the blockchain’s client software that lets external applications read the current state of the network or submit new transactions.

The Polling Dilemma

In the early days of DeFi, developers built decentralized applications by having the frontend constantly poll these RPC nodes for updates. A user’s wallet or trading interface would ask the node “What is the price of Ethereum now?” and a few seconds later, it would ask again.

This architecture created a severe latency problem. Standard blockchain queries are inherently slow because public RPC nodes are frequently congested. When network activity spikes, like during a highly anticipated token launch or a period of intense market volatility, these nodes throttle requests or drop them entirely.
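The cost of polling can be put in rough numbers. The sketch below is a simplified model, not a measurement of any real node: it assumes polls fire on a fixed schedule starting at time zero, and that each response takes a fixed round trip to come back. The function name and the timings are illustrative assumptions.

```typescript
// Illustrative model only: the timings are made-up inputs, not measurements.

/** Delay between an on-chain update and the moment a polling client can see it. */
function pollingDelayMs(updateAtMs: number, pollIntervalMs: number, roundTripMs: number): number {
  // Polls fire at 0, pollIntervalMs, 2 * pollIntervalMs, and so on.
  const nextPollAtMs = Math.ceil(updateAtMs / pollIntervalMs) * pollIntervalMs;
  // The client only sees the new value once the response has travelled back.
  return nextPollAtMs - updateAtMs + roundTripMs;
}

// Hypothetical price changes at 100 ms, 2900 ms and 3001 ms,
// observed by a client polling every 3 seconds with a 250 ms round trip.
const updatesAtMs = [100, 2900, 3001];
const delays = updatesAtMs.map((t) => pollingDelayMs(t, 3000, 250));
console.log(delays); // [ 3150, 350, 3249 ]
```

Under these assumptions the delay depends almost entirely on where the update lands relative to the polling schedule, which is why shortening the interval only trades staleness for more load on an already congested node.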

The Cost to Professional Traders and UX

For a retail user checking their portfolio balance, a three-second delay is a minor annoyance. For a professional trader, a market maker, or an algorithmic trading bot, a three-second delay renders the data virtually useless. By the time a standard RPC node returns the price of a volatile asset, arbitrage bots operating directly at the blockchain level, often using Maximal Extractable Value (MEV) strategies, have already capitalised on the discrepancy. The trader acting on delayed data is essentially trading against ghosts.

Furthermore, this latency severely hampers broader user experience. Web2 applications have trained consumers to expect instantaneous feedback. When an interface hangs while waiting for blockchain data, user trust erodes. The Defined engineering team recognised early that relying on raw RPC polling was a dead end. To build a genuinely professional financial platform, they had to bypass the bottleneck entirely.

Indexing the Impossible: How Defined Sorts On-Chain Chaos

If querying an RPC node is like asking a librarian to slowly read you a single page of a constantly changing book, indexing is the process of instantly scanning, categorising, and memorising the entire library.

The Sheer Volume of Events

The scale of blockchain data is staggering. Across dozens of active Layer 1 and Layer 2 networks – Ethereum, Arbitrum, Solana, Base, and many others – new blocks are produced continuously, on some chains several times per second. Embedded within these blocks are millions of raw events: token transfers, liquidity pool deployments, smart contract executions, and intricate decentralized exchange routing paths.

This data is naturally messy. It exists in complex hexadecimal formats designed for machine efficiency, not human readability.

Extract, Transform, Serve

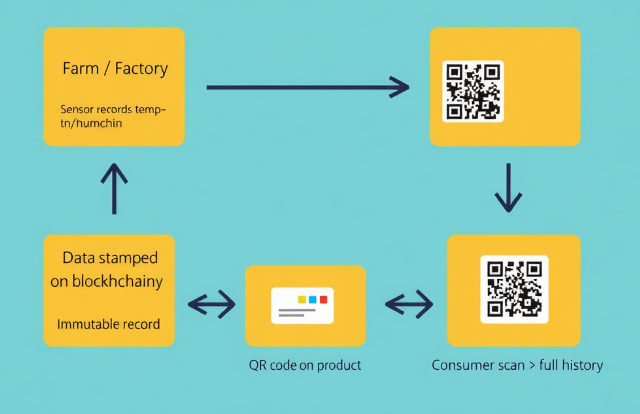

To serve this data in milliseconds, the Defined infrastructure runs a relentless, real-time pipeline modelled on the classic Extract, Transform, Load (ETL) pattern, with the final stage reframed as Serve.

Extract: The system listens to the mempool, where pending transactions sit before confirmation, and to newly minted blocks across dozens of chains simultaneously.

Transform: The raw hexadecimal logs are instantly decoded. The system calculates derived metrics on the fly, translating a complex multi-hop swap on Uniswap into a clean, human-readable US dollar price.

Serve: Finally, this refined data is pushed to the end-user or developer before the next block is finalised.
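To make the Transform step concrete, here is a minimal sketch of decoding one kind of raw log: a standard ERC-20 Transfer event. It is not Defined’s implementation. The topic hash is the well-known signature hash for the ERC-20 Transfer event; the interface names, helper names, addresses, and sample log are assumptions made up for illustration.

```typescript
// keccak256("Transfer(address,address,uint256)"): the standard ERC-20 Transfer event signature.
const TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef";

interface RawLog { topics: string[]; data: string; }
interface DecodedTransfer { from: string; to: string; amount: string; }

/** An indexed address is padded to 32 bytes; the address is the last 20 bytes (40 hex chars). */
function topicToAddress(topic: string): string {
  return "0x" + topic.slice(-40);
}

/** Render a raw integer token amount as a decimal string using the token's decimals. */
function formatUnits(raw: bigint, decimals: number): string {
  const base = BigInt("1" + "0".repeat(decimals));
  const whole = raw / base;
  const fraction = (raw % base).toString().padStart(decimals, "0").replace(/0+$/, "");
  return fraction ? `${whole}.${fraction}` : whole.toString();
}

/** Returns null for any log that is not an ERC-20 Transfer. */
function decodeTransfer(log: RawLog, decimals: number): DecodedTransfer | null {
  if (log.topics.length !== 3 || log.topics[0] !== TRANSFER_TOPIC) return null;
  return {
    from: topicToAddress(log.topics[1]),
    to: topicToAddress(log.topics[2]),
    amount: formatUnits(BigInt(log.data), decimals),
  };
}

// Made-up sample log: 1,500,000 base units (0x16e360) of a token with 6 decimals.
const sampleLog: RawLog = {
  topics: [
    TRANSFER_TOPIC,
    "0x" + "0".repeat(24) + "11".repeat(20), // placeholder sender
    "0x" + "0".repeat(24) + "22".repeat(20), // placeholder recipient
  ],
  data: "0x" + "16e360".padStart(64, "0"),
};
const decoded = decodeTransfer(sampleLog, 6);
console.log(decoded?.amount); // 1.5
```

A production pipeline would go further, for example by combining decoded swap amounts with a reference price to derive a US dollar figure, but the shape is the same: fixed-width hexadecimal in, typed and human-readable values out.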

Handling Network Reorganisations

Complicating this further is the reality of chain reorgs. Occasionally, blockchain networks experience micro-forks where the truth of the ledger shifts slightly before reaching finality. An effective indexing architecture cannot simply ingest data linearly; it must be deeply state-aware, capable of rolling back and recalculating historical data when a network reorg occurs. Building an engine that can sort, clean, and verify this chaotic stream of information without losing fidelity requires elite systems engineering, and it is the kind of problem that only reveals its full complexity under sustained live-environment pressure.
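A minimal sketch of the rollback idea, assuming each block header carries its own hash and its parent’s hash. The class and field names are hypothetical, and a real indexer would also have to undo the derived data (prices, balances, pool states) attached to every rolled-back block.

```typescript
interface BlockHeader { number: number; hash: string; parentHash: string; }

class ReorgAwareChain {
  // The canonical chain as currently believed, oldest block first.
  private blocks: BlockHeader[] = [];

  /** Appends a block and returns any blocks that had to be rolled back first. */
  apply(block: BlockHeader): BlockHeader[] {
    if (this.blocks.length === 0) {
      this.blocks.push(block);
      return [];
    }
    // Walk back from the tip until we find the new block's parent.
    let parentIndex = -1;
    for (let i = this.blocks.length - 1; i >= 0; i--) {
      if (this.blocks[i].hash === block.parentHash) { parentIndex = i; break; }
    }
    if (parentIndex === -1) {
      throw new Error(`Unknown parent ${block.parentHash}; older blocks must be refetched`);
    }
    // Everything after the parent belongs to the abandoned fork.
    const rolledBack = this.blocks.splice(parentIndex + 1);
    this.blocks.push(block);
    return rolledBack;
  }

  tip(): BlockHeader | undefined {
    return this.blocks[this.blocks.length - 1];
  }
}

const chain = new ReorgAwareChain();
chain.apply({ number: 1, hash: "a", parentHash: "genesis" });
chain.apply({ number: 2, hash: "b", parentHash: "a" });
chain.apply({ number: 3, hash: "c", parentHash: "b" });
// A competing block 3 arrives that builds on block 2: block "c" is rolled back.
const dropped = chain.apply({ number: 3, hash: "c2", parentHash: "b" });
console.log(dropped.map((b) => b.hash), chain.tip()?.hash); // [ 'c' ] c2
```

The important design choice is that `apply` reports what was discarded, so downstream consumers can recalculate anything that depended on the abandoned blocks.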

The Defined Stack: Prioritising Sub-Second Delivery

The Defined.fi stack was built to treat data delivery speed as the primary engineering constraint. Based on public documentation and the structure of Defined’s SDKs, the architecture relies on several advanced paradigms to achieve genuine real-time performance.

WebSocket Dominance Over HTTP REST

While HTTP REST APIs are the standard for most web applications, they follow a request-response model: the client must send a new request for every update, and each round trip adds overhead that is significant in high-frequency environments. The Defined architecture leans heavily on WebSocket connections and GraphQL subscriptions, which create a persistent, open tunnel between the Defined indexing servers and the client. When a liquidity pool updates on-chain, the Defined server instantly pushes that data down the tunnel. The client doesn’t need to ask for it; it simply receives it the moment it becomes available.
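As a sketch of what a subscription looks like on the wire, the code below builds and handles messages in the shape used by the open graphql-transport-ws protocol (the one implemented by the graphql-ws library). Whether Defined’s servers speak this exact protocol is an assumption, and the `priceUpdated` subscription field and its arguments are hypothetical names invented for illustration, not taken from the Codex schema.

```typescript
// Message shapes follow the graphql-transport-ws protocol; only the subset used here is typed.
type ServerMessage =
  | { type: "connection_ack" }
  | { id: string; type: "next"; payload: { data?: Record<string, unknown> } }
  | { id: string; type: "complete" };

// Hypothetical subscription: the field and argument names are placeholders.
const PRICE_SUBSCRIPTION = `subscription ($address: String!, $networkId: Int!) {
  priceUpdated(address: $address, networkId: $networkId) { priceUsd timestamp }
}`;

/** Sent once, after the server has acknowledged the connection. */
function subscribeMessage(id: string, query: string, variables: Record<string, unknown>): string {
  return JSON.stringify({ id, type: "subscribe", payload: { query, variables } });
}

/** Called for every frame the server pushes; the client never asks again. */
function handleMessage(raw: string, onData: (data: Record<string, unknown>) => void): void {
  const msg = JSON.parse(raw) as ServerMessage;
  if (msg.type === "next" && msg.payload.data) onData(msg.payload.data);
}

// Simulated exchange: one outgoing subscribe frame, one pushed update.
const outgoing = subscribeMessage("1", PRICE_SUBSCRIPTION, { address: "0xabc", networkId: 1 });
const received: Record<string, unknown>[] = [];
handleMessage(
  JSON.stringify({ id: "1", type: "next", payload: { data: { priceUpdated: { priceUsd: 1234.5 } } } }),
  (data) => received.push(data),
);
console.log(JSON.parse(outgoing).type, received.length); // subscribe 1
```

In a browser these strings would travel over a single long-lived WebSocket, which is the point of the design: one subscribe frame going up, then any number of updates coming down.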

In-Memory Processing and Global Edge Networks

To deliver data globally in sub-second timeframes, the architecture cannot rely on traditional disk-based databases. Even fast SSD reads introduce unacceptable latency for real-time pricing. The Defined stack utilises in-memory data storage for its most frequently requested data, such as real-time prices of active tokens and states of high-volume liquidity pools, enabling retrieval times measured in microseconds rather than milliseconds.
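The idea can be sketched with nothing more than a hash map keyed by network and token address. This is an illustration of the pattern under simple assumptions, not a description of Defined’s actual storage layer; the class name, key format, and freshness rule are hypothetical.

```typescript
interface PricePoint { priceUsd: number; updatedAtMs: number; }

class LatestPriceStore {
  private readonly prices = new Map<string, PricePoint>();
  private readonly maxAgeMs: number;

  constructor(maxAgeMs: number) {
    this.maxAgeMs = maxAgeMs;
  }

  // Addresses are lower-cased so that checksummed and plain forms share one key.
  private static key(networkId: number, token: string): string {
    return `${networkId}:${token.toLowerCase()}`;
  }

  set(networkId: number, token: string, priceUsd: number, nowMs: number): void {
    this.prices.set(LatestPriceStore.key(networkId, token), { priceUsd, updatedAtMs: nowMs });
  }

  /** Returns the latest price, or undefined if it is missing or older than maxAgeMs. */
  get(networkId: number, token: string, nowMs: number): number | undefined {
    const point = this.prices.get(LatestPriceStore.key(networkId, token));
    if (point === undefined || nowMs - point.updatedAtMs > this.maxAgeMs) return undefined;
    return point.priceUsd;
  }
}

const store = new LatestPriceStore(1000); // treat prices older than one second as stale
store.set(1, "0xABC", 1234.5, 0);
console.log(store.get(1, "0xabc", 500));  // 1234.5
console.log(store.get(1, "0xabc", 2000)); // undefined
```

Passing the clock in as a parameter keeps the sketch deterministic; the staleness check matters because, for pricing data, returning nothing is usually safer than returning an old value as if it were current.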

A globally distributed edge network handles geographic distribution, ensuring that a developer or trader in Tokyo is not waiting for data to travel from a server in Virginia. Data is cached and served from the point of presence closest to the requester.

Unified GraphQL Schema

A significant architectural decision in the Defined stack is its unified GraphQL schema. Instead of forcing clients to make multiple round-trip requests to assemble a complete picture (one endpoint for token price, another for metadata, and a third for historical chart data), GraphQL allows the client to specify a precise, complex data payload in a single query. The backend aggregates and returns it in one motion, substantially reducing network overhead and simplifying the integration burden for developers building on top of the API.
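The sketch below shows the general shape of such a request: one GraphQL document that asks for metadata, price, and chart bars together, wrapped in the conventional JSON body of `query` plus `variables`. The field names, arguments, and variable names are hypothetical placeholders chosen for illustration; the real schema should be taken from the Codex documentation.

```typescript
// Hypothetical schema: token, price and bars are placeholder field names.
const TOKEN_OVERVIEW_QUERY = `
  query TokenOverview($address: String!, $networkId: Int!, $from: Int!, $to: Int!) {
    token(address: $address, networkId: $networkId) { name symbol decimals }
    price(address: $address, networkId: $networkId) { priceUsd }
    bars(address: $address, networkId: $networkId, from: $from, to: $to) { t o h l c }
  }`;

interface OverviewVariables {
  address: string;
  networkId: number;
  from: number; // start of the chart window, Unix seconds
  to: number;   // end of the chart window, Unix seconds
}

/** Builds the JSON body for a single POST that replaces three separate REST calls. */
function buildRequestBody(variables: OverviewVariables): string {
  return JSON.stringify({ query: TOKEN_OVERVIEW_QUERY, variables });
}

const body = buildRequestBody({ address: "0xabc", networkId: 1, from: 1700000000, to: 1700003600 });
console.log(JSON.parse(body).variables.networkId); // 1
```

Because the client names exactly the fields it wants, the response carries no unused data, and adding a fourth piece of information means editing the query rather than integrating another endpoint.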

Dev-Ex: The Codex SDK

Building a fast backend is only half the engineering problem. If external developers cannot integrate that data cleanly into their own applications, the infrastructure’s value is trapped. The Codex API and its accompanying SDK address this through deliberate attention to developer experience.

Frictionless Integration

Historically, building a crypto application meant assembling a patchwork of tools: one provider for RPC node access, another for historical charts, a third for token metadata. Each integration brought its own schema, its own failure modes, and its own maintenance burden. The Codex SDK abstracts this entirely. A developer installs a single package and gains access to cross-chain pricing, liquidity data, wallet attribution, and historical analytics through a consistent interface. What previously represented months of backend engineering can be integrated in a working afternoon.

Type Safety and Predictability

In financial software, the cost of a runtime error is not just an inconvenient bug report; it can be a material loss. The Codex SDK is strongly typed with TypeScript, giving developers compile-time error checking and robust auto-completion. If a developer queries a field that doesn’t exist, their editor flags it before the code reaches production. This is not a convenience feature; in high-stakes financial environments, it is a reliability safeguard.
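A small illustration of the principle, using a made-up response type rather than the SDK’s real ones: the `TokenPrice` interface and `describePrice` helper are assumptions for the example.

```typescript
// Hypothetical response shape for illustration; not the SDK's actual types.
interface TokenPrice {
  address: string;
  networkId: number;
  priceUsd: number;
}

function describePrice(p: TokenPrice): string {
  return `${p.address} on network ${p.networkId}: $${p.priceUsd.toFixed(2)}`;
}

const sample: TokenPrice = { address: "0xabc", networkId: 1, priceUsd: 1234.5 };
const description = describePrice(sample);
console.log(description); // 0xabc on network 1: $1234.50

// A misspelled field is rejected by the type checker before the code ever runs.
// The directive below tells the compiler that an error is expected on the next line.
// @ts-expect-error Property 'priceUsdd' does not exist on type 'TokenPrice'.
const typo = sample.priceUsdd;
```

In plain JavaScript the last line would silently evaluate to `undefined` and might surface much later as a wrong number on a trading screen; with types, it fails in the editor.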

Empowering the Next Generation of dApps

By removing the data infrastructure burden from development teams, the SDK frees engineering capacity for the work that actually differentiates products: better user experiences, novel financial instruments, improved security design. It transforms the Codex API from a data service into a foundational layer, the substrate on which the broader ecosystem builds.

The Competitive Edge: Speed as an Uncopyable Moat

In software, features get copied. A polished user interface can be replicated by a competitor in weeks. Reliable, low-latency infrastructure cannot be forked.

Understanding the Competitive Landscape

The market for blockchain data APIs is not empty, and any honest analysis of Codex’s position requires acknowledging who else occupies it. Established players including The Graph, Alchemy, QuickNode, and Moralis each serve distinct segments of this market.

The Graph’s decentralised indexing protocol is well-suited to censorship-resistant data access and has a strong developer community, but its architecture introduces query latency and complexity that high-frequency trading applications cannot absorb. Alchemy and QuickNode are excellent at their core function of providing reliable RPC node access, but do not natively provide the enriched, aggregated financial data that trading and analytics applications actually require: USD pricing, liquidity pool analytics, wallet-level attribution, and cross-chain aggregation. Moralis offers developer tooling across multiple chains but similarly does not deliver the depth of financial intelligence at the latency levels that professional trading infrastructure demands.

It is in the gap between raw node access and genuine financial intelligence (enriched, standardised, and delivered at sub-second speed across 80-plus networks) that the Codex architecture operates without a direct equivalent.

The Compounding Advantage of Live Operation

The deeper competitive moat, however, is not a technical specification that can be read off a comparison chart. It is temporal. Years of live, high-volume operation under real market conditions – absorbing edge cases, handling chain reorganisations, stress-testing during peak volatility events, and onboarding new networks as they launch – produce an infrastructure that a new entrant cannot replicate by writing equivalent code. A competitor beginning today does not just need to build a faster indexer. They need to compress years of live-environment refinement into a fraction of the time, without the user base that generates the query volume that reveals the edge cases in the first place.

The Institutional Standard

Traditional financial institutions beginning to engage seriously with on-chain activity are not looking for the most feature-rich interface. They are looking for the provider with the strongest reliability record, the most consistent data fidelity, and the clearest path to Service Level Agreements and guaranteed uptimes. Technical architecture is not evaluated in isolation; it is evaluated in the context of operational history.

By prioritising architecture over aesthetics from the beginning, the Defined engineering team has built something more durable than a popular product. They have built the kind of infrastructure whose value compounds as the market it serves matures.

Conclusion

Crossing the latency moat is the critical unlock for the next phase of Web3 adoption. The transition from delayed, RPC-dependent applications to real-time, instantly responsive financial tooling is what will finally close the gap between the expectations of institutional capital and the reality of decentralised infrastructure.

The architecture that Braden Simpson and the Defined engineering team have built is the product of years of deliberate choices made in exactly that direction, with Simpson leading system design, Binnersley building the operational backbone, Lambert shaping the developer-facing layer, and the broader team pressure-testing the whole under live conditions. Speed, predictability, and developer accessibility were not features added to a finished product. They were the constraints that shaped every decision from the beginning.

Defined.fi is not simply participating in the evolution of the market. It is building the engine that makes that evolution possible.

Disclaimer: This article is for educational purposes only. It does not constitute financial or investment advice. The DeFi ecosystem evolves rapidly; readers are encouraged to verify current developments independently.